Kranti Kumar Parida1,

Siddharth Srivastava2,

Gaurav Sharma1,3

1IIT Kanpur, 2CDAC Noida, 3TensorTour Inc.

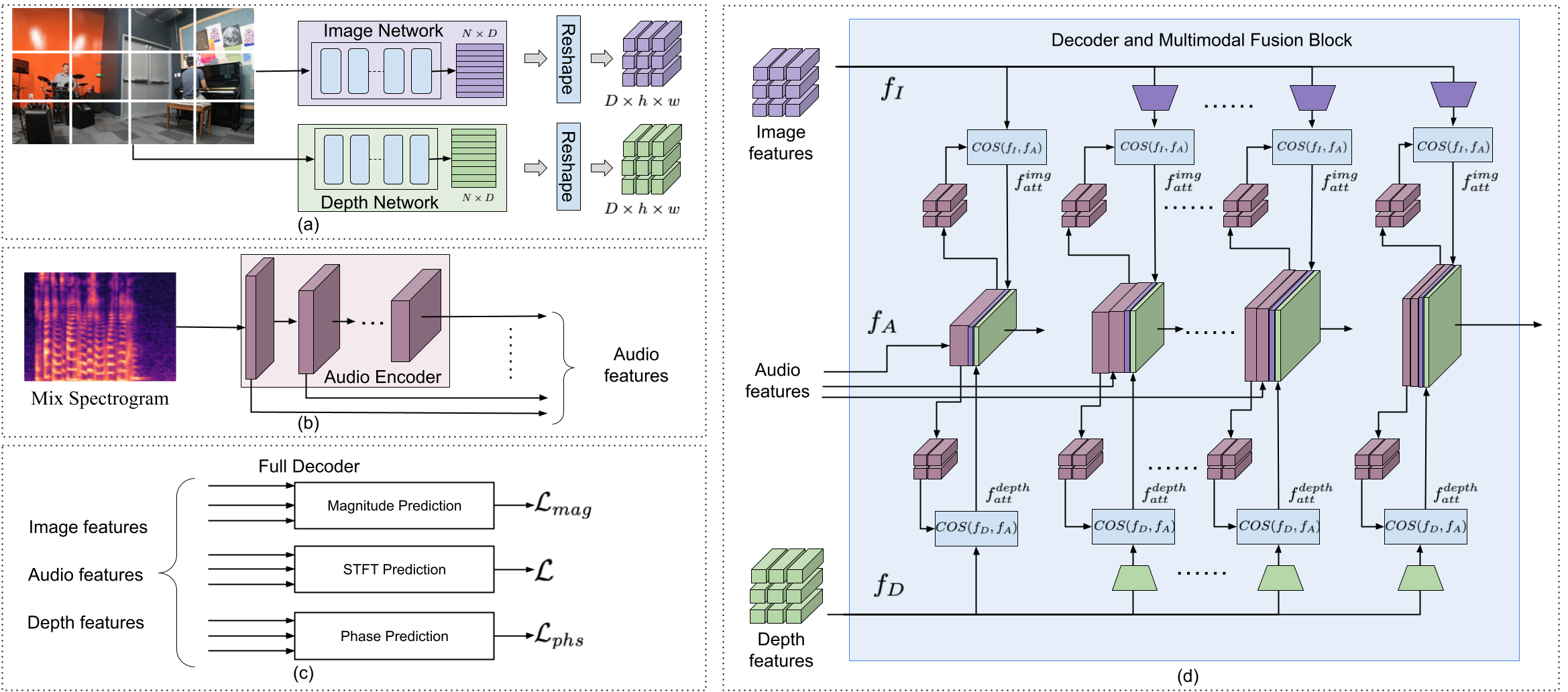

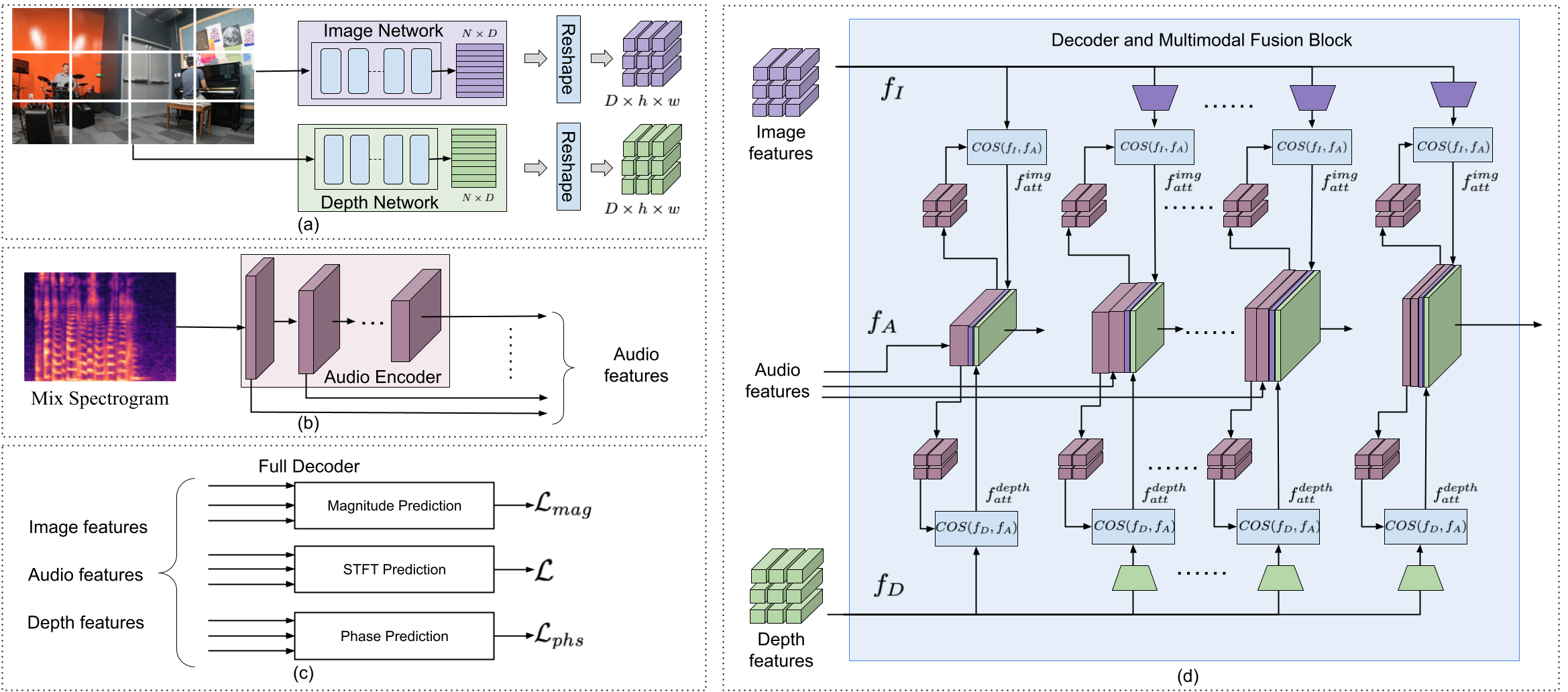

Binaural audio gives the listener an immersive experience and can especially enhance augmented and virtual reality. However, recording binaural audio requires a specialized setup with a dummy human head fitted with microphones in the left and right ears. Because such a setup is difficult to obtain and use, mono audio remains the popular choice in everyday devices. Researchers have tried to lift mono audio to binaural audio by conditioning on the visual input from the scene. Such approaches have not used an important cue for the task: the distance of the different sound-producing objects from the microphones. In this work, we argue that the depth map of the scene can act as a proxy for the distance information of different objects in the scene for the task of audio binauralization. We propose a novel encoder-decoder architecture with a hierarchical attention mechanism to encode image, depth, and audio features jointly. We design the network on top of state-of-the-art transformer networks for image and depth representation. We show empirically that the proposed method comfortably outperforms state-of-the-art methods on two challenging public datasets, FAIR-Play and MUSIC-Stereo. We also demonstrate with qualitative results that the method is able to focus on the right information required for the task.

If you use the code or dataset from the project, please cite:

@InProceedings{parida2022beyond,

author = {Parida, Kranti Kumar and Srivastava, Siddharth and Sharma, Gaurav},

title = {Beyond Mono to Binaural: Generating Binaural Audio from Mono Audio with Depth and Cross Modal Attention},

booktitle = {Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision},

year = {2022}

}